Medical AI Outperforms Doctors: What the Harvard Study Really Tells Us

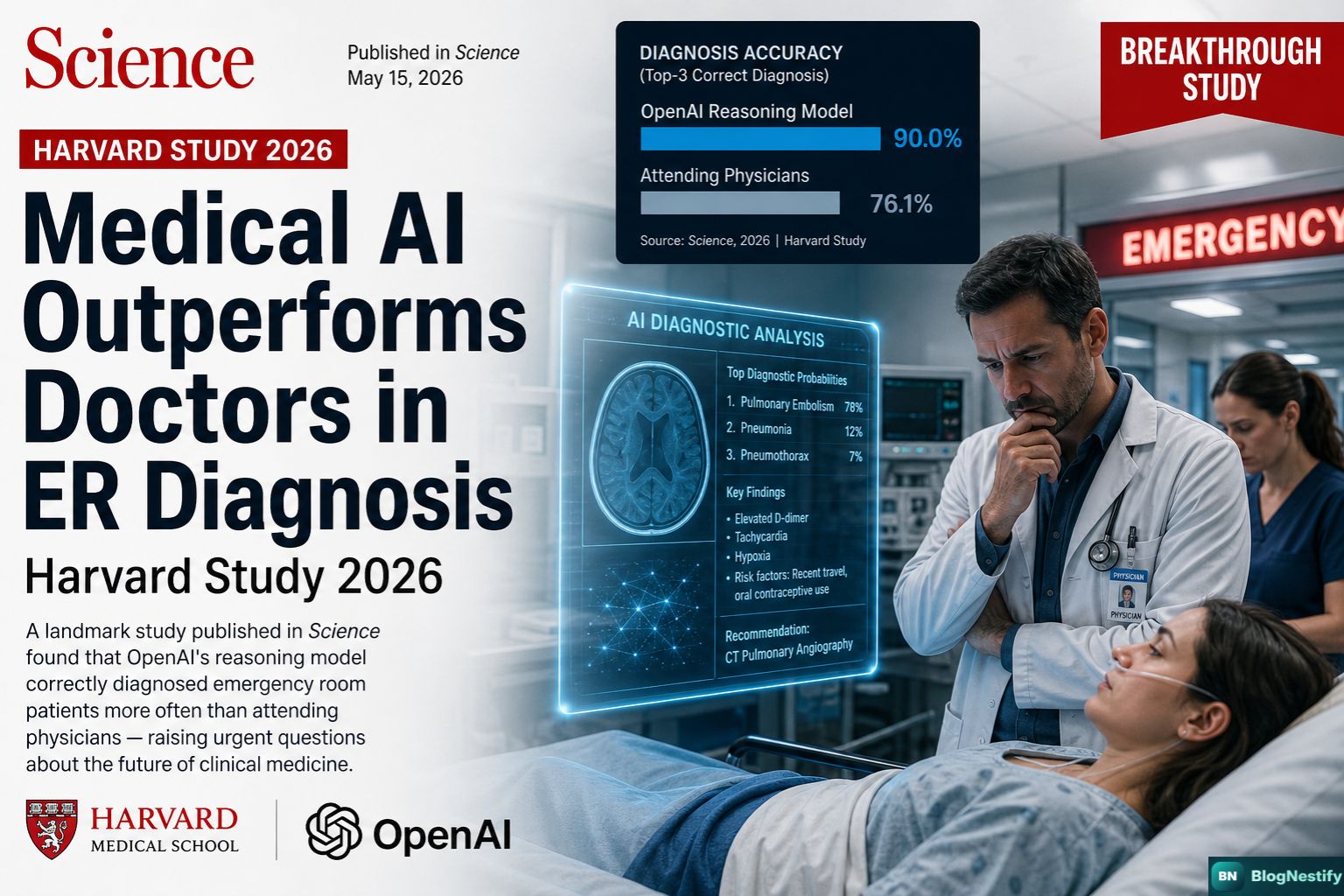

A landmark study published in Science found that OpenAI's reasoning model correctly diagnosed emergency room patients more often than attending physicians — raising urgent questions about the future of clinical medicine.

A patient walks into a Boston emergency room, short of breath, their blood pressure dipping and rising unpredictably. The attending physicians stabilize the condition — only to watch it worsen hours later. The medication, they suspect, is failing. What they don't see — but an artificial intelligence does — is a buried clue in the electronic health record: a possible history of lupus, an autoimmune condition that could be silently inflaming the heart.

That real patient, treated at Beth Israel Deaconess Medical Center in Boston, is now at the center of a story reshaping how medicine understands its own future. And the AI, as it turns out, was right.

The Study That Changed the Conversation

On April 30, 2026, researchers at Harvard Medical School and Beth Israel Deaconess Medical Center — with contributions from Stanford University — published a study in the prestigious journal Science that many in the medical community called "the clearest demonstration yet" of what AI can do inside a real hospital.

The study put OpenAI's o1 reasoning model through six separate, rigorous experiments — each one pitting the AI against groups of doctors ranging from residents to senior specialists. In every single experiment, without exception, the AI came out ahead.

- Published in Science journal, April 30, 2026

- Led by Harvard Medical School & Beth Israel Deaconess Medical Center

- Co-researchers from Stanford University

- AI tested: OpenAI's o1-preview (chain-of-thought reasoning model)

- 76 real ER cases from Beth Israel used for the primary experiment

- Six separate experiments run; AI outperformed humans in all six

How the Tests Actually Worked

The most significant experiment — the one that most closely mirrors what actually happens in clinical practice — involved 76 real patients who arrived at the Beth Israel emergency department between 2021 and 2024. Both the AI model and two specialist physicians received identical data: electronic health records, vital signs, and a few sentences written by the intake nurse describing why the patient came in.

Two additional doctors, who had no idea whether they were reviewing an AI's answer or a human physician's, then evaluated every diagnosis independently. The AI landed on the correct or very-close diagnosis in 67.1% of cases. The two attending physicians scored 55.3% and 50% respectively — a gap that, in a real emergency room, could mean the difference between a patient going home or being admitted to intensive care.

The model outperformed our very large physician baseline. These systems are no longer just passing medical exams or solving artificial test cases.

— Raj Manrai, Assistant Professor of Biomedical Informatics, Harvard Medical SchoolThe AI's advantage was even more pronounced in rare and complex cases — the category where diagnostic errors carry the highest cost. Across 143 NEJM case reports spanning 2021 to 2024, the model identified the correct diagnosis in 78.3% of cases. When the threshold was broadened to include "very close" answers, that figure rose to 97.9%.

The Numbers vs. the Narrative

It would be easy to read these findings and imagine hospitals replacing their staff with servers. That's not what the data says — and it's explicitly not what the researchers say either. But understanding the nuance requires taking the numbers seriously first.

| Test Scenario | AI (o1) | Human Doctors |

|---|---|---|

| ER Triage (76 real cases) | 67.1% accuracy | 50–55.3% accuracy |

| With more patient detail available | ~82% accuracy | 70–79% accuracy |

| NEJM complex case reports (143 cases) | 78.3% exact / 97.9% near-exact | Physician baseline (lower) |

| Head-to-head vs GPT-4 (70 cases) | 88.6% accuracy | GPT-4: 72.9% |

| Did it sound like a human doctor? | 83.6–94.4% indistinguishable | — |

That last row deserves a pause. The two doctors asked to guess whether each diagnosis was written by a human or an AI couldn't tell the difference in 83.6% and 94.4% of cases. The AI wasn't just more accurate — it reasoned the way a doctor thinks and wrote the way a doctor speaks.

Why This Model Is Different From What Came Before

Earlier large language models — the kind of AI that was stumbling through medical licensing exams just a few years ago — had a specific weakness: they struggled to handle uncertainty and failed to produce structured differential diagnoses (the ranked list of what might be wrong with a patient). OpenAI's o1 model belongs to a newer generation called "chain-of-thought" reasoning models. Instead of jumping to an answer, it works through the problem step by step, weighing evidence before arriving at a conclusion.

That's not just a technical upgrade. In medicine, the process of reasoning matters as much as the conclusion. A doctor who gets the right answer by guessing isn't safe; a doctor who gets the right answer by methodically ruling out alternatives is. The o1 model, in the words of Harvard doctoral student Thomas Buckley, appears to be "approaching optimal diagnosis" on the kinds of complex cases that have been used to test diagnostic computer systems since 1959.

Unlike traditional language models that predict the next word in a sentence, chain-of-thought reasoning models like OpenAI's o1 are trained to reason through multi-step problems before arriving at an answer — similar to how a doctor considers, eliminates, and ranks possible diagnoses before committing to one.

Where AI Is Genuinely Useful — and Where It Falls Short

The study was careful to draw a clear boundary between what AI does well and what it currently cannot do. The o1 model processed only text-based electronic health records. It saw no X-rays, heard no lung sounds, observed no patient demeanor. In real clinical care, those inputs aren't optional — they're foundational. A physician who sees a patient wince when pressing on their abdomen has information no EHR can capture.

Where the AI clearly excels, however, is in the first, information-sparse minutes of care — the critical window during early triage when a doctor has only vitals, demographics, and a brief nurse's note to work with. That is precisely the scenario where the AI's advantage was most pronounced, and it's also the scenario where errors are most costly and most common.

- Early triage with limited patient information

- Rare and complex disease identification

- Second-opinion support for uncertain or borderline cases

- Passive background monitoring of electronic health records for missed diagnoses

- Standardized diagnostic reasoning across high-volume departments

- Cannot process medical images, audio, or nonverbal patient cues

- Retrospective study — not yet validated in live prospective trials

- Not equipped for final treatment decisions requiring moral and contextual judgment

- Real-world deployment requires regulatory approval and clinical validation

- Risk of over-reliance if clinicians treat AI output as definitive

What Doctors and Researchers Are Actually Saying

The reaction from the medical community has been measured but unmistakably significant. Harvard Medical School researcher Arya Rao was quick to note that clinical reasoning is not the same as moral reasoning — a point that matters deeply when treatment decisions involve end-of-life care, patient autonomy, or conflicting family interests.

Arjun Manrai described the shift as "profound," predicting that medicine will increasingly operate as a collaboration between human clinicians and AI systems — each covering the ground the other struggles with most. Dr. Adam Rodman of Harvard, who leads AI integration at Beth Israel Deaconess, put it plainly: "It works with the messy data of a real emergency room. It works for real-world diagnosis."

That last sentence is not throwaway. For years, critics of medical AI argued that lab benchmarks and curated test sets told us nothing about how these systems perform with the chaotic, incomplete, contradictory data that fills real hospital records. This study confronted that criticism directly — and the AI held up.

The Bigger Picture: Medicine at a Crossroads

The Harvard study didn't arrive in a vacuum. By 2025 — already a lifetime ago in this space — roughly 1 in 5 doctors and nurses globally were already consulting large language models for second opinions on difficult cases. More than half said they wanted to use AI that way. The question was never really whether AI would enter the clinic. It already had. The question is how hospitals now choose to channel it.

The research team outlined three near-term use cases most likely to produce real-world benefit: passive background monitoring of electronic health records to flag potential diagnostic errors before they become patient harm; real-time triage support during those first sparse minutes of care; and second-opinion consulting for rare, complex, or uncertain cases. What they did not endorse — not yet, and not without rigorous prospective trials — is fully autonomous AI clinical decision-making.

Professor Ewen Harrison, Co-Director of the University of Edinburgh's Centre for Medical Informatics, described the study's significance as a threshold moment: these systems have moved beyond passing exams and solving textbook cases. They are now operating on real patients, with real records, under real clinical uncertainty — and outperforming the humans who trained for years to do exactly that.

What This Means for Patients

If you are a patient, the near-term implication is not that a machine will be diagnosing you instead of your doctor. It's that your doctor may soon have a tireless, pattern-recognizing assistant quietly reading your records in the background — one that doesn't get fatigued at the end of a 12-hour shift, doesn't anchor on the most recent case it saw, and can surface a possibility even the most experienced clinician might miss.

That is genuinely good news. Diagnostic errors — missed diagnoses, delayed diagnoses, wrong diagnoses — affect an estimated 12 million Americans every year. They are the leading cause of serious, preventable patient harm. Anything that reliably reduces that number deserves serious attention, regardless of how it challenges our assumptions about who and what medicine is.

The machine learned to think like a doctor. Now medicine has to decide what to do with that.

Frequently Asked Questions

The study, published in Science in April 2026, found that OpenAI's o1 reasoning model achieved 67.1% diagnostic accuracy in real emergency room triage — outperforming two attending physicians who scored 55.3% and 50%. The AI outperformed doctor baselines across all six experiments conducted.

The study used OpenAI's o1 (o1-preview) model — a chain-of-thought reasoning model that reasons step by step before arriving at conclusions. This makes it fundamentally different from earlier large language models that would jump directly to an output.

No — and the researchers were explicit about this. The AI processed only text records and had no access to medical images, physical examination findings, sounds, or nonverbal patient cues. Human doctors remain essential for final treatment decisions, patient communication, ethical judgment, and all aspects of care that go beyond text-based pattern recognition.

Key limitations include: the AI used text-only data; no medical images or clinical exam findings were included; the study was retrospective rather than a live clinical trial; real-world deployment would require prospective validation and regulatory approval; and the risk of clinician over-reliance on AI output must be managed carefully.

The study was published in the journal Science on April 30, 2026. It was led by researchers at Harvard Medical School and Beth Israel Deaconess Medical Center in Boston, with collaboration from Stanford University researchers.

The most promising near-term applications include: passive background monitoring of EHR records to flag missed diagnoses; real-time triage support during the first minutes of care when information is sparse; and second-opinion consulting for rare or uncertain cases. The researchers called for controlled prospective clinical trials before broader deployment.

0 Comments

Leave a Comment