The $700 Billion AI Bet: 2026

What Amazon, Google, Microsoft & Meta Are Actually Building

2026 Update: This article reflects figures and context as of May 2026. Spending commitments have grown from the 2025 announcements — all four companies have reaffirmed or increased their AI infrastructure budgets heading into the second half of this year.

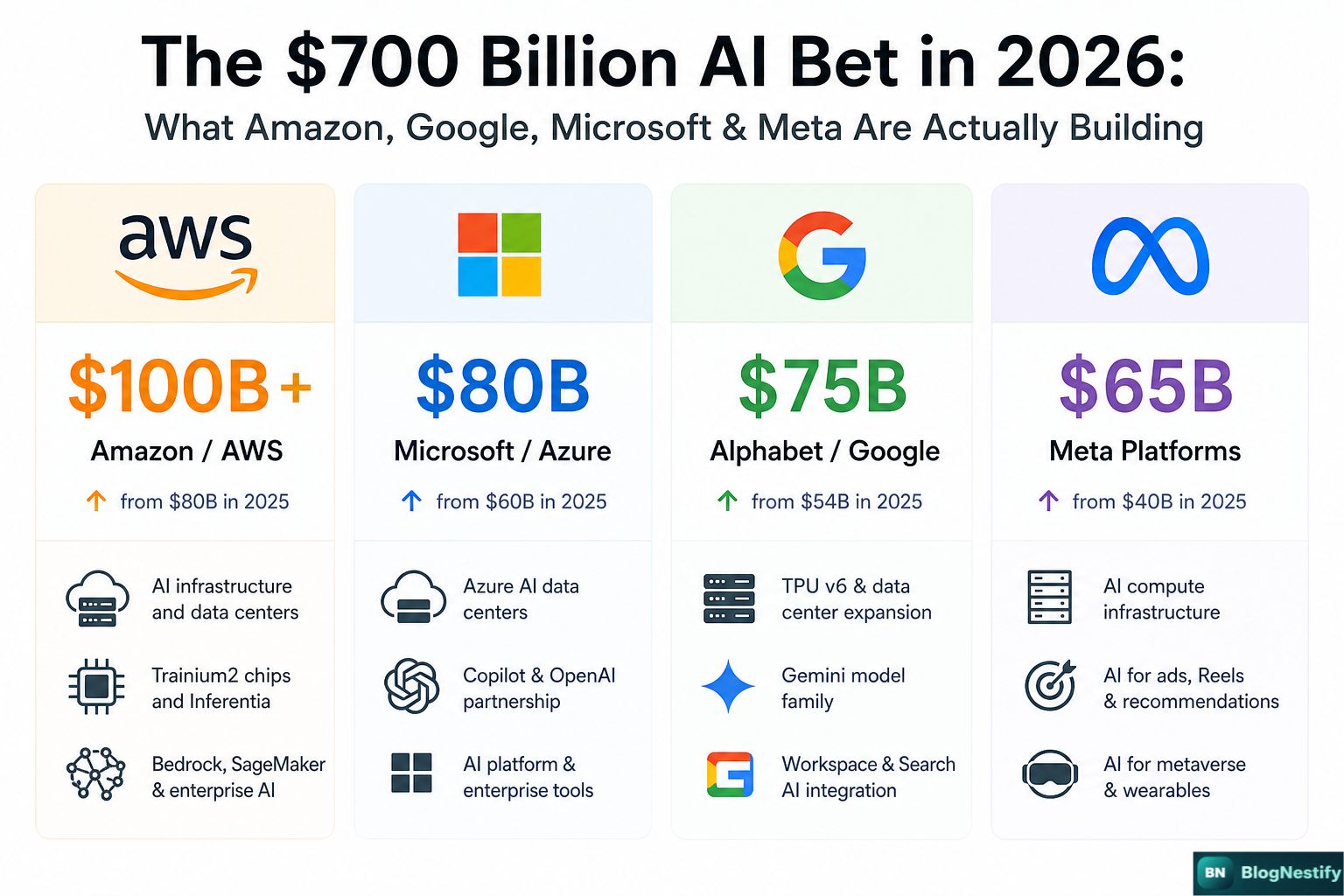

Seven hundred billion dollars. Committed. Not projected, not hoped for: committed. In the first four months of 2026, Amazon, Alphabet, Microsoft, and Meta each reaffirmed or expanded their AI infrastructure budgets. Stack the numbers up and you're looking at over $700 billion flowing into data centers, GPU clusters, custom chips, and energy capacity this year alone. That's on the scale of a mid-sized European economy's entire annual GDP, and it's being spent on the pipes, not the products.

I've been watching this story since the original announcements came out, and what's changed most in 2026 isn't the scale. It's the tone. A year ago, this spending felt aspirational. Companies were betting on demand they hoped would materialize. Today, the enterprise AI market is real enough that CFOs can point to actual revenue lines. The bets are paying off — unevenly, and not yet at a pace that justifies $700B, but enough to keep the spending going.

What Changed Between 2025 and Today

The headline number is bigger, but the story underneath has shifted in three meaningful ways.

Who's Spending What — and What's New in 2026

The strategy behind each company's spending has sharpened considerably from where it stood 12 months ago.

"In 2025, this was a bet. In 2026, it's still a bet — just one with a few winning hands already on the table."

What $700 Billion Buys in 2026

The supply chain behind modern AI infrastructure has gotten somewhat easier to source than it was in 2024 — but not by much. NVIDIA's Blackwell GPUs are in high demand and allocation is still competitive. Custom silicon from all four companies is maturing but not yet at the point where any of them can fully replace third-party GPUs.

The 2026 AI Infrastructure Stack

- GPU & Custom Silicon: NVIDIA Blackwell (B100/B200) clusters remain the gold standard. Amazon's Trainium 2, Google's TPU v5, and Meta's MTIA 2 handle growing portions of workloads.

- Networking: 400G InfiniBand and Ethernet scale-up fabrics. All four companies are deploying high-speed interconnects that let tens of thousands of GPUs train models together.

- Data Centers: Each new hyperscale facility costs $1–8B. Construction timelines average 18–36 months from groundbreaking to live, meaning 2026 spend translates to live capacity in 2027–2028.

- Energy: Nuclear power purchase agreements are now real and signed. Microsoft's deal with Constellation Energy and Amazon's nuclear investments are reshaping how AI companies think about long-term power security.

- Cooling: Liquid cooling has replaced air cooling as the default for GPU clusters. It's more expensive to install but necessary for the power density of modern AI hardware.
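
To see how the 18–36 month construction window in the list above maps onto calendar dates, here's a minimal sketch. The January 2026 groundbreaking date and the helper names (`add_months`, `go_live_window`) are assumptions for illustration, not figures from any of the four companies.

```python
from datetime import date


def add_months(start: date, months: int) -> date:
    """Return the first day of the month that falls `months` after `start`."""
    total = start.year * 12 + (start.month - 1) + months
    return date(total // 12, total % 12 + 1, 1)


def go_live_window(groundbreaking: date, min_months: int = 18, max_months: int = 36):
    """Earliest and latest go-live months for a build time of 18 to 36 months."""
    return add_months(groundbreaking, min_months), add_months(groundbreaking, max_months)


# Hypothetical project that breaks ground in January 2026.
earliest, latest = go_live_window(date(2026, 1, 1))
print(earliest, latest)  # 2027-07-01 2029-01-01
```

On these assumptions, even a project started at the very beginning of 2026 goes live no earlier than mid-2027, and one at the slow end of the range slips to early 2029. The 2027–2028 window is best read as where most of the capacity arrives, not a hard cutoff.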

The Questions That Haven't Been Answered Yet

Revenue is growing. But the honest read in May 2026 is that the spending still outpaces the returns. That's not unusual for infrastructure — roads and railways took decades to generate their economic value. The question is whether AI infrastructure follows that curve or a different one.

There's also the energy problem, which is getting harder to ignore. All four companies have net-zero commitments. All four are also signing nuclear and natural gas deals to keep the lights on in new data centers. Those two things are in tension, and the accounting for it — carbon credits, renewable energy certificates — is getting more scrutiny from regulators and investors in 2026 than it did a year ago.

And competition is evolving in ways that weren't visible 12 months ago. Chinese AI models trained on less hardware have caught up faster than the US industry expected. That puts some pressure on the assumption that raw compute spending is the primary moat. It turns out algorithmic efficiency matters as much as — maybe more than — GPU count.

What This Means If You're Not a Tech Giant

The practical takeaway for everyone outside these four companies is straightforward: better, cheaper AI tools are coming, faster than most people expect. When companies compete this hard for the same market, prices fall and capabilities improve. The API cost for running GPT-4-class intelligence has dropped over 90% since 2023. That trend continues.
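
For a sense of what a 90% drop means year to year, here's a rough sketch that converts a cumulative price decline into an implied constant annual rate. The three-year span (2023 to 2026) is an assumption, and real price cuts came in uneven steps, so treat the result as an average and nothing more.

```python
def annualized_decline(total_drop: float, years: float) -> float:
    """Constant yearly decline rate that compounds to `total_drop` over `years`."""
    remaining = 1.0 - total_drop          # fraction of the original price left
    return 1.0 - remaining ** (1.0 / years)


# Assumed inputs: a 90% total drop spread over 3 years.
rate = annualized_decline(0.90, 3)
print(f"{rate:.1%}")  # 53.6%
```

In other words, a 90% drop over three years works out to prices falling by a bit more than half each year, on average.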

If you're building software, the infrastructure being built right now will make AI integration cheaper and more reliable over the next 18 months. If you're a business user, the AI features embedded in tools you already pay for — Office, Google Workspace, Salesforce — will quietly get better without a price increase.

The less comfortable takeaway is that this spending is also training AI systems that will do knowledge work that humans currently do. That's not a conspiracy theory — it's what the companies themselves say they're building. The $700 billion is financing a replacement economy, not just a productivity boost. How fast that unfolds is still genuinely unclear, but the direction isn't.

The Honest Bottom Line for May 2026

The $700 billion is real. The returns are partial but growing. The timeline for full justification is probably 2028–2030. And the companies spending this money don't have a clean exit — they've publicly committed, hired the engineers, signed the construction contracts, and told investors this is the bet. There's no stepping back now.

What I think is clear from watching this closely: the companies getting the most out of AI infrastructure in 2026 are the ones that figured out the product before they built the factory. Microsoft had OpenAI. Meta had its own research. The lesson for everyone watching from the outside is that compute without a clear application is expensive storage. The winners in 2028 will be the ones that knew what they were building the infrastructure for.
