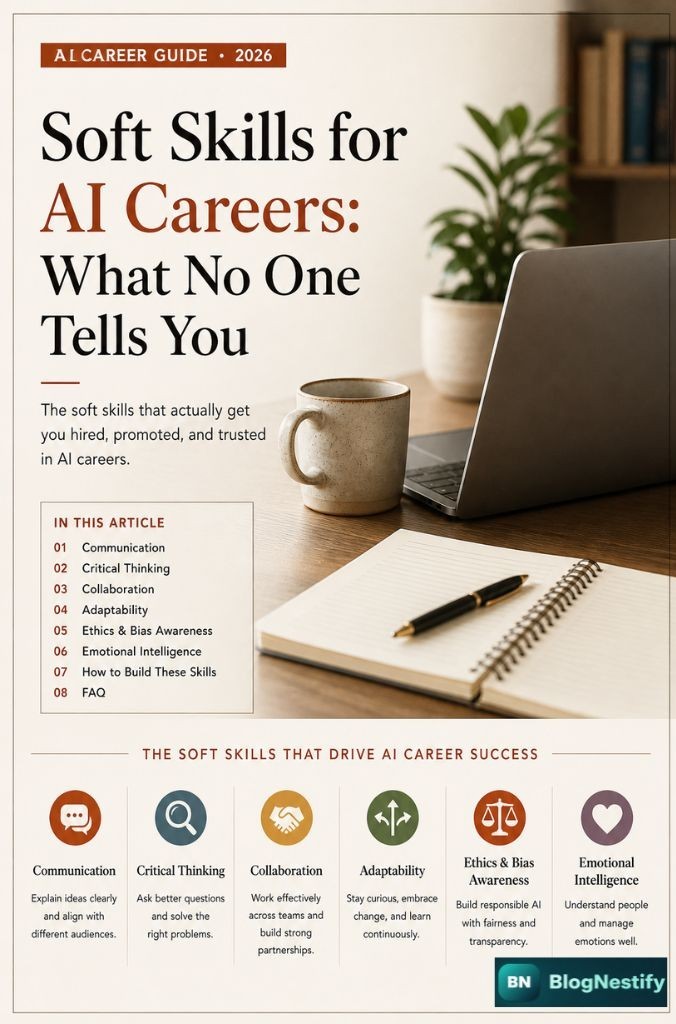

Soft Skills for AI Careers: What No One Tells You

Every job posting lists Python, TensorFlow, and cloud platforms. What postings don't list, but hiring teams absolutely judge you on, are the skills that aren't on your GitHub.

You got the interview. You passed the LeetCode round. You can explain gradient descent at a whiteboard. And then you didn't get the job. Sound familiar?

Most people in AI know this feeling. The technical bar is high, sure, but it's not what actually separates people who thrive from people who plateau. After working in AI-adjacent roles and talking with dozens of engineers, data scientists, and ML leads, I see a pretty clear pattern: the people who move up fast are not necessarily the ones with the best models. They're the ones who can explain what those models do, work well with others, and handle the messy, human side of AI work.

This isn't a list of buzzwords. These are actual skills that show up in code reviews, stakeholder meetings, failed deployments, and promotion conversations.

1. Communication: The Real Interview Skill

If you can build a transformer from scratch but can't explain why it's the right tool for a given problem, you'll spend your career being underestimated. Communication in AI isn't about dumbing things down. It's about knowing who you're talking to and adjusting.

With a PM, you talk about timelines and tradeoffs. With an executive, you talk about business risk and cost. With a junior engineer, you explain architecture. With a client, you talk about outcomes. These are four completely different conversations about the same model.

The uncomfortable truth is that communication is often what gets someone a Senior title over someone equally skilled. Can you run a meeting without it going off the rails? Can you write a post-mortem that doesn't assign blame but still captures what went wrong? Can you push back on a bad request without making someone feel stupid? All of that is communication.

The ML engineer who explains their work clearly tends to outlast the one who just ships code.

2. Critical Thinking & Problem Framing

There's a specific failure mode in AI work where someone gets a task, builds a solution, ships it, and then discovers the task was the wrong one. Someone asked "can we predict churn?" and the answer was technically yes — but no one checked whether acting on that prediction would actually reduce churn.

Critical thinking in AI careers means questioning the problem before solving it. That includes asking: What's the actual goal here? What happens if the model is wrong? Who does this decision affect? Is this a prediction problem, or is it a behavior change problem?

It also means breaking large, vague goals into concrete, solvable sub-problems. This is different from writing code; it happens before that.

Precision vs. recall. Speed vs. accuracy. Privacy vs. personalization. Every AI decision involves tradeoffs most people never name explicitly.

The training data was "clean." The labels were "accurate." The production environment "matches" the test set. Critical thinkers check these claims.

Accuracy on a held-out test set is not a business metric. Understanding what "working" means to the people using the output matters enormously.
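To make the precision vs. recall tradeoff concrete, here is a minimal sketch in plain Python. The scores, labels, and thresholds are made-up illustrative values for a hypothetical churn model, not data from any real system. It only shows that moving the decision threshold trades one metric for the other, which is why the "right" threshold depends on what a false alarm or a missed case costs the people using the output.

```python
# Hypothetical churn scores and true outcomes (1 = churned). Illustrative data only.
scores = [0.95, 0.80, 0.70, 0.55, 0.40, 0.30, 0.20, 0.10]
labels = [1, 1, 0, 1, 0, 1, 0, 0]


def precision_recall(scores, labels, threshold):
    """Return (precision, recall) when predicting positive at score >= threshold."""
    predicted = [s >= threshold for s in scores]
    tp = sum(1 for p, y in zip(predicted, labels) if p and y == 1)
    fp = sum(1 for p, y in zip(predicted, labels) if p and y == 0)
    fn = sum(1 for p, y in zip(predicted, labels) if not p and y == 1)
    # Guard against division by zero when nothing is predicted or nothing is positive.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


# A lower threshold catches more churners (higher recall) but flags more false alarms.
for threshold in (0.25, 0.50, 0.75):
    p, r = precision_recall(scores, labels, threshold)
    print(f"threshold={threshold:.2f} precision={p:.2f} recall={r:.2f}")
```

On this toy data, the strictest threshold gives perfect precision but finds only half the churners, while the loosest finds all of them at the cost of more false positives. Which of those is acceptable is a business question, not a modeling one.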

Critical thinking is also what protects you from scope creep. When someone says "while you're at it, can you also add X?" — you need to be able to evaluate that request on its merits, not just add it because you were asked.

3. Cross-Functional Collaboration

AI projects don't exist in a silo. The data engineer needs clean pipelines before you can do anything. The product manager is deciding what gets prioritized. The legal team has opinions about what you can store. The sales team is promising capabilities to clients that may or may not exist yet. You work with all of them.

Collaboration in AI isn't just about being nice (though that helps). It's about understanding what other people actually need from you and what their constraints are. A data engineer who doesn't understand the downstream ML requirements will build pipelines that technically work but don't serve the model. An ML engineer who doesn't understand legal constraints will build features that never ship.

The best AI teams tend to have people who are genuinely curious about the work happening around them — not just their own slice. That curiosity is what makes cross-functional collaboration actually work rather than just existing in theory.

4. Adaptability in a Fast-Moving Field

The tools change constantly. In the last five years, the standard toolkit for NLP went from bag-of-words to BERT to GPT-family models to fine-tuned open-source LLMs. If you attached your identity to one framework or approach, each of those shifts felt like a threat. If you were adaptable, they felt like opportunities.

Adaptability doesn't mean learning every new thing that drops on arXiv. It means being comfortable with uncertainty, willing to unlearn things that no longer apply, and able to evaluate new tools without being attached to old ones.

This also applies to job roles. An ML engineer today might find that their role shifts toward LLM orchestration, prompt engineering, or AI product management. Being adaptable means staying curious about where things are headed rather than defending where they've been.

5. Ethical Judgment & Bias Awareness

This one used to be optional. It isn't anymore. Regulatory pressure, public scrutiny, and a string of high-profile failures have made ethical judgment a practical requirement in AI careers — not just a philosophical nice-to-have.

Bias in AI systems doesn't usually come from someone deciding to build something harmful. It comes from training data that reflects historical inequities, evaluation metrics that don't capture real-world harm, and deployment contexts that nobody anticipated. Catching these things requires someone paying attention — and that someone is increasingly expected to be the ML engineer, not a separate ethics team.

| Skill Area | Technical Only | Technical + Ethical Judgment |
|---|---|---|
| Model Evaluation | Accuracy, F1 on test set | Checks for performance gaps across demographic subgroups |
| Data Collection | Maximize labeled examples | Asks who is represented and who is missing |
| Deployment Decision | Ship when metrics pass | Considers downstream impact on affected users |
| Feature Engineering | Best predictive features | Flags legally or ethically protected proxies |
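As a rough illustration of the "checks for performance gaps across demographic subgroups" row, here is a minimal sketch in plain Python. The group names, records, and helper functions are hypothetical, and accuracy is used only because it is the simplest metric to show. A real audit would likely look at several metrics and much larger samples.

```python
from collections import defaultdict

# Hypothetical evaluation records: (subgroup, true_label, predicted_label).
records = [
    ("group_a", 1, 1), ("group_a", 0, 0), ("group_a", 1, 1), ("group_a", 0, 0),
    ("group_b", 1, 0), ("group_b", 0, 0), ("group_b", 1, 1), ("group_b", 1, 0),
]


def accuracy_by_group(records):
    """Return a dict mapping each subgroup to its accuracy."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, y_true, y_pred in records:
        total[group] += 1
        correct[group] += int(y_true == y_pred)
    return {group: correct[group] / total[group] for group in total}


def largest_gap(per_group):
    """Return the difference between the best and worst subgroup accuracy."""
    return max(per_group.values()) - min(per_group.values())


per_group = accuracy_by_group(records)
overall = sum(int(t == p) for _, t, p in records) / len(records)

print(f"overall accuracy: {overall:.2f}")
for group, acc in sorted(per_group.items()):
    print(f"{group}: {acc:.2f}")
print(f"largest gap: {largest_gap(per_group):.2f}")
```

The point of the sketch is that a single overall number can look acceptable while one subgroup does far worse. Breaking the metric out by group is a small amount of code, and it is the kind of question the right-hand column of the table is asking.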

Companies that have gotten into regulatory trouble didn't lack smart engineers. They lacked people who asked the right questions at the right time. That's a skill you can develop, and it has real career value.

6. Emotional Intelligence

This is the one that gets the most eye-rolls in technical circles. And it's also the one that shows up most clearly in who gets promoted and who stays stuck.

Emotional intelligence in AI work isn't touchy-feely — it's practical. It's knowing how to deliver feedback on someone's code without making them defensive. It's recognizing when a stakeholder is anxious about a delay versus actually frustrated with the work. It's managing your own reaction when your model gets rejected after three months of development.

Where EQ Shows Up in AI Careers

A technically correct review that's delivered dismissively damages team cohesion. EQ doesn't mean going soft — it means being direct and respectful at the same time.

When a non-technical stakeholder challenges your model's predictions, the emotionally intelligent response isn't defensiveness — it's curiosity about what they're seeing that you aren't.

Models fail in production. Projects get cancelled. Priorities shift. How you respond to those moments is visible to everyone around you.

Most AI decisions require buy-in from people you don't manage. Getting that buy-in without being pushy or manipulative is EQ in action.

7. How to Actually Build These Skills

Reading about soft skills is easy. Building them takes repetition in real contexts. A few things that actually work:

Write more than you think you need to. Blog posts, internal documentation, post-mortems, Slack messages explaining a decision — all of it sharpens communication. If you can't write it clearly, you don't understand it clearly yet.

Present to non-technical audiences regularly. Volunteer to present your work in all-hands meetings, not just technical reviews. The constraint of speaking to people who don't know the jargon is one of the fastest ways to develop clarity.

Join cross-functional projects. If your company has a project that involves product, design, or operations, find a way to be involved — even at the edges. The exposure is worth more than the task itself.

Ask for feedback on how you work, not just what you produce. Most performance reviews focus on outputs. Explicitly asking a manager or peer "how was it to work with me on this?" surfaces blind spots that outputs don't show.

Study AI ethics cases. COMPAS, Clearview AI, Amazon's recruiting tool, Google Photos' labeling errors — these aren't ancient history. Reading what went wrong and why trains your ethical judgment without requiring you to make the mistakes yourself.

The Short Version

Technical skills get you in the room. Soft skills determine what happens once you're there. The AI field is full of very skilled engineers who are underutilized because they can't communicate their work, struggle with ambiguity, or don't collaborate well. It's also full of people with slightly less polished technical chops who are doing extremely well because they do everything else well.

None of this is fate. These are learnable skills. Pick the weakest one on this list and spend real time on it. The return is usually faster than people expect — partly because not enough people in AI are working on this stuff.

Khushal writes about AI careers, technology trends, and the human side of working in tech at Blognestify. He focuses on the practical stuff that actually moves careers — not the hype. Based in India, writing for people building in AI everywhere.
